Summary

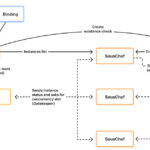

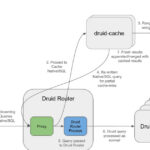

This article examines sampling for distributed tracing, a necessity in modern observability because distributed systems generate massive volumes of trace data. It distinguishes head sampling (deciding upfront) from tail sampling (deciding after collection) and explains the trade-offs and OpenTelemetry implementations of each. Head sampling is simpler and often random; it can be applied through trace context propagation or in the observability pipeline, but it struggles with localized issues. Tail sampling is more effective at identifying 'interesting' traces but is operationally complex: it requires multi-tiered OpenTelemetry Collector architectures and stateful processing, and it demands careful consideration of networking costs and data completeness. A critical takeaway is that sampling fundamentally compromises the accuracy of derived metrics such as RED (rate, errors, duration) metrics. Metrics must therefore be materialized *before* sampling discards data, often through specialized connectors in the observability pipeline, which adds further architectural complexity.
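The effect on derived metrics can be illustrated with a small simulation. The following is a minimal sketch, not the article's code or OpenTelemetry's implementation: the trace records, the function names, the 1% error rate, and the 5% baseline ratio are all assumptions chosen for illustration. It makes a deterministic head-sampling decision from the trace ID, applies a tail-sampling policy that keeps every error trace plus a baseline share of the rest, and compares the error rate computed before and after sampling.

```python
import random

ID_BITS = 64  # assumption: decide using the low 64 bits of a 128-bit trace ID


def simulate_traces(n, error_rate, seed=0):
    """Generate hypothetical trace records with a random ID and an error flag."""
    rng = random.Random(seed)
    return [
        {"trace_id": rng.getrandbits(128), "error": rng.random() < error_rate}
        for _ in range(n)
    ]


def head_sample(trace, ratio):
    """Head sampling: decide upfront from the trace ID alone.

    The decision depends only on the ID, so every component that sees
    the same trace reaches the same verdict without coordination.
    """
    low_bits = trace["trace_id"] & ((1 << ID_BITS) - 1)
    return low_bits < ratio * (1 << ID_BITS)


def tail_sample(trace, baseline_ratio):
    """Tail sampling: decide after the outcome is known.

    Illustrative policy: keep every error trace, plus a baseline
    share of the remaining traces.
    """
    return trace["error"] or head_sample(trace, baseline_ratio)


def error_rate(traces):
    """Fraction of traces flagged as errors (0.0 for an empty list)."""
    return sum(t["error"] for t in traces) / len(traces) if traces else 0.0


traces = simulate_traces(100_000, error_rate=0.01)

# Materialized BEFORE sampling: computed over everything that happened.
true_rate = error_rate(traces)

# Computed AFTER tail sampling: error traces are over-represented.
kept = [t for t in traces if tail_sample(t, baseline_ratio=0.05)]
sampled_rate = error_rate(kept)

head_kept_fraction = sum(head_sample(t, 0.05) for t in traces) / len(traces)

print(f"error rate before sampling: {true_rate:.4f}")
print(f"error rate after sampling:  {sampled_rate:.4f}")
print(f"share kept by head sampling alone: {head_kept_fraction:.4f}")
```

Under these assumptions, the error rate computed from the retained traces is several times the true one, because the policy deliberately over-represents errors. This is why metrics need to be derived from the full stream before any trace is discarded.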

Why It Matters

Technical IT operations leaders should read this article for its comprehensive and nuanced treatment of distributed tracing sampling, a critical lever for managing observability cost and effectiveness in distributed environments. It lays out the operational complexities and trade-offs of the different sampling strategies, particularly tail sampling, which directly affect infrastructure design, resource allocation, and troubleshooting. Understanding these challenges, especially the loss of metric accuracy and the need to materialize metrics before sampling, is crucial for making informed decisions about observability pipeline architecture and vendor selection. It also helps ensure that monitoring data remains reliable for SLOs, alerting, and performance analysis, which in turn supports more efficient and resilient systems.